I've always been fascinated by floppy disks, from the crazy stories of Steve Wozniak designing the Disk II controller using a handful of logic chips and carefully timed software to the amazing tricks used to create - and break - copy protection, recently popularised by 4am.

I'm going to be writing a few articles about data preservation and copy protection but first we need a short primer.

Media sizes

There were all sorts of attempts at different disk sizes, but these were the major players:

- 8" - The granddaddy of them all, but not used on home PCs. They did look cool with the IMSAI 8080 in WarGames though.

- 5.25" - The 5.25" disk format appeared from Shugart Associates in 1976 and was soon adopted by machines such as the BBC Micro and IBM PC, with 360KB being the usual capacity for double-sided disks.

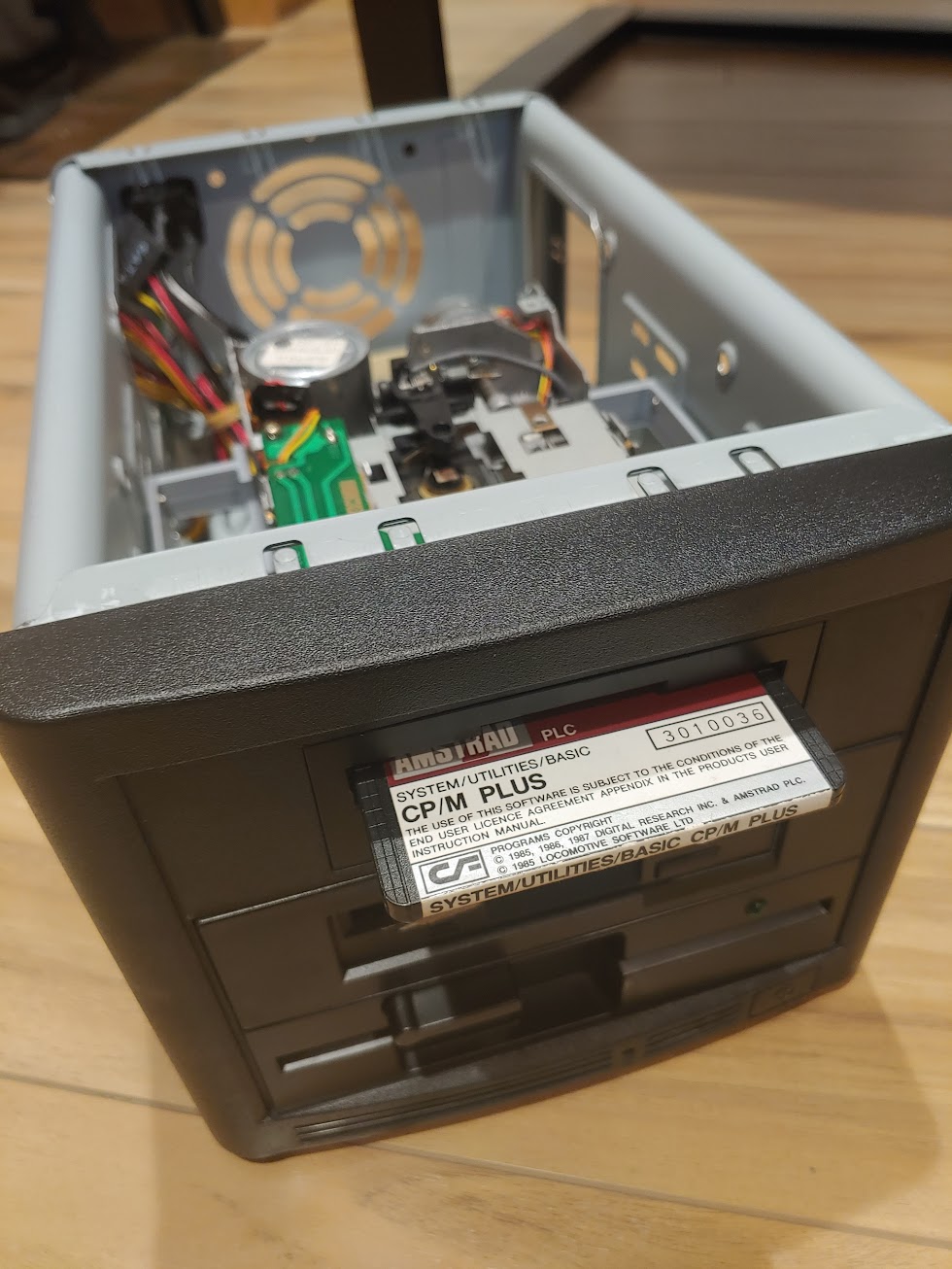

- 3" - Hitachi developed the 3" drive, which saw some third-party solutions before being adopted by the Oric and Tatung Einstein. Matsushita licensed its simpler, cheaper version of the drive to Amstrad, where it saw use on the CPC, PCW and Spectrum +3.

- 3.5" - Sony developed this around 1982 and it was quickly adopted by the PC, Mac, Amiga, Atari ST and many third-party add-ons.

Sides

Almost all disks are double-sided, but many drives are single-sided to reduce manufacturing costs. Some 5.25" disks were made to be flipped over, and kits were available to turn ordinary ones into flippable disks. The 3" disk had this built in. Double-sided drives write to both sides using two heads, while single-sided drives require you to flip the disk over.

An interesting artifact of this is that while you can read single-sided disks in a double-sided drive by flipping them as usual, a single-sided drive has challenges with disks written by a double-sided drive.

While the second side can be physically read, the data is effectively backwards because that side was written by a head that saw the disk rotating in the opposite direction. Flux-level imagers can read these and theoretically reverse the image to compensate, but computers back in the day had little chance. There is also the complication that most formats interleave the data between the heads for read speed rather than writing one side then the other. Long story short: read double-sided disks on a double-sided drive.

Tracks

Each side of the disk has the surface broken down into a number of rings known as tracks that start at track 0 on the outside and work their way in. 40 tracks is typical in earlier lower-density media and 80 in higher density depending on both the drive itself and the designation of the media.

Some disks provided an extra hole so the drive itself could identify whether they were high density or not, while others like Amstrad's 3" media simply had a different colored label and the media itself was identical.

Some custom formats and copy protection systems pushed this number up to 41 or 42 tracks, so it's always worth imaging at least one extra track to make sure it's unformatted and you're not losing anything. Additionally, some machines like the C64 used fewer tracks - 35.

Sectors

Finally we have sectors, which are segments of a track. Typically a disk will have 9 or 10 sectors per track, all the same size, but some machines have more or fewer. Each sector is typically a power of 2 in length - 128 bytes through 1024 bytes (1KB) is typical, although some copy protection pushes this higher. Each sector has an ID number, and while they might be numbered sequentially they are often written out of order on the track. This technique, called interleaving, helps when the host machine can't finish processing one sector before the next whizzes by: spacing consecutive sector IDs apart gives it time to catch up, at least for the standard DOS/OS that will be reading them.
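To make the geometry and interleaving concrete, here is a rough Python sketch. The function names and the `skew` parameter are made up for illustration and don't come from any particular DOS; real formats chose their interleave to suit their own hardware and sometimes used hand-picked tables instead.

```python
def disk_capacity(sides, tracks, sectors_per_track, bytes_per_sector):
    """Formatted capacity in bytes for a simple, uniform disk geometry."""
    return sides * tracks * sectors_per_track * bytes_per_sector


def interleave_order(sector_count, skew):
    """Return sector IDs in the order they physically sit around the track.

    Consecutive IDs are placed `skew` slots apart (hypothetical parameter),
    moving on to the next free slot whenever the target is already taken.
    """
    layout = [None] * sector_count
    pos = 0
    for sector_id in range(1, sector_count + 1):
        while layout[pos] is not None:      # slot taken, slide to the next one
            pos = (pos + 1) % sector_count
        layout[pos] = sector_id
        pos = (pos + skew) % sector_count   # leave a gap before the next ID
    return layout


# 2 sides x 40 tracks x 9 sectors x 512 bytes = 368640 bytes = 360KB
print(disk_capacity(2, 40, 9, 512) // 1024)   # 360

# With a skew of 2, sector 2 sits two slots after sector 1, and so on
print(interleave_order(9, 2))                 # [1, 6, 2, 7, 3, 8, 4, 9, 5]
```

With a skew of 1 the sectors come out in plain sequential order, which is fine for a machine fast enough to keep up with the disk.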

Encoding

Floppy disks themselves can store only magnetic charges that are either on or off. You might imagine the computer would map a binary 1 to a magnetic charge and a 0 to no charge, but this immediately causes problems:

- Timing - drives rotate the disk at slightly different speeds, and if the data changes too infrequently we lose sync

- Strong bits - too many on-bits together will cause a strong magnetic charge that will leak over to neighbouring areas

- Weak bits - too many off-bits together will leave such a weak magnetic charge that we will pick up background noise

In order to avoid these problems, encoding schemes map the computer's binary 1s and 0s into on-disk sequences. Two of the simpler ones to explain are:

- FM - Stores 0 as 10 and 1 as 11 on the disk, giving 50% efficiency

- GCR - Stores a nibble (4 bits) as one of the 16 approved 5-bit sequences on-disk, giving 80% efficiency
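The two schemes above can be sketched in a few lines of Python. The bit strings are just for readability, and the function names are illustrative. GCR came in several flavours; the table below is the one used by Commodore's 1541 drive, which is an assumption about which variant is meant here.

```python
def fm_encode(data: bytes) -> str:
    """FM: every data bit is preceded by a clock bit that is always 1."""
    out = []
    for byte in data:
        for shift in range(7, -1, -1):          # most significant bit first
            bit = (byte >> shift) & 1
            out.append("1" + str(bit))          # 0 -> "10", 1 -> "11"
    return "".join(out)


# Commodore 1541 GCR table (assumed variant): index is the 4-bit nibble.
# No code has more than two 0s in a row, even across code boundaries.
GCR_TABLE = [
    "01010", "01011", "10010", "10011",
    "01110", "01111", "10110", "10111",
    "01001", "11001", "11010", "11011",
    "01101", "11101", "11110", "10101",
]


def gcr_encode(data: bytes) -> str:
    """GCR: each nibble becomes one 5-bit code, high nibble first."""
    out = []
    for byte in data:
        out.append(GCR_TABLE[byte >> 4])
        out.append(GCR_TABLE[byte & 0x0F])
    return "".join(out)


print(fm_encode(b"\xA5"))    # 1110111010111011  (16 bits for 8)
print(gcr_encode(b"\x4A"))   # 0111011010        (10 bits for 8)
```

Note how even a byte of all zeros still produces regular 1s on the disk in both schemes, which is exactly what keeps the drive in sync.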

Other schemes use different tables or, in the case of MFM (the most popular), only insert a clock bit between two consecutive 0s. This keeps the flux transitions further apart, meaning you can actually write the data at twice the density and still be within the tolerances of the disk head's ability to spot transitions.
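As a minimal sketch of the usual MFM rule (a 1 is written as 01, and a 0 as 10 if the previous data bit was also 0, otherwise 00), here it is in Python. The function name and the `prev_bit` parameter are illustrative, and real formats also use special sync marks that deliberately break this rule, which are not shown.

```python
def mfm_encode(data: bytes, prev_bit: int = 0) -> str:
    """MFM: a clock bit of 1 is only written between two 0 data bits."""
    out = []
    for byte in data:
        for shift in range(7, -1, -1):          # most significant bit first
            bit = (byte >> shift) & 1
            clock = 1 if (bit == 0 and prev_bit == 0) else 0
            out.append(f"{clock}{bit}")
            prev_bit = bit                      # remember for the next clock
    return "".join(out)


print(mfm_encode(b"\xA5"))   # 0100010010010001
print(mfm_encode(b"\x00"))   # 1010101010101010
```

The output never contains two 1s side by side, so transitions are always at least two bit cells apart. That spacing is what lets the cells be written at half the width of FM for the same head and media.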

Controller

Off-the-shelf controller chips added cost to a disk system, so some systems - notably the Apple ][ and Amiga - did the job using their own custom logic and software. This gave rise to some interesting disk formats and incredible copy-protection mechanisms.

Meanwhile, companies like Western Digital and NEC produced dedicated floppy controller chips such as the WD1770 (BBC Master), WD1772 (Atari ST), WD1793 (Beta Disk), VL1772 (Disciple/+D) and the NEC 765A (Spectrum, Amstrad), which traded that flexibility for simpler integration.

Finally there were general-purpose processors which were repurposed for controlling the floppy such as the Intel 8271 (BBC Micro) or MOS 6502 (inside the Commodore 64's 1541 drive).

Copy protection

Many people think computers are all digital and so the only way to protect information is via encryption and obfuscation. While both techniques are used in copy protection, floppy disks exist in our analogue world and so are open to all sorts of tricks to make things harder to copy, from exploiting weak bits to creating tracks so long they wrap back onto themselves. Check out PoC||GTFO issue 0x10 for some of the crazy techniques on the Apple ][.
